Posts Tagged ‘culture’

Stress or Pressure?

This post is a verbatim reprint from a book I wrote with Marco Iansiti of Harvard Business School, One Strategy: Organization, Planning, and Decision Making (Smile link). The original content was from a Microsoft internal blog post dated April 23, 2008. More context is available in the book (Google Books link). Posts were written for the Windows team but available to the whole company at the same time.

One of the things that is really important to me is making sure working on Windows and Windows Live is a low-stress job. Stress is evil, in fact stress is defined as:

stress: strain felt by somebody: mental, emotional, or physical strain caused, e.g. by anxiety or overwork. It may cause such symptoms as raised blood pressure or depression.

The thing about stress is that it is both physical and emotional. Stress is all about a loss of control (anxiety). Loss of control comes from not really knowing the goals, not understanding what success looks like, and in our vernacular, about being random. Stress comes because the work required is incompatible with your capabilities or your view of success. Stress is about a mismatch between your reality and the reality of your manager or team.

Stress in the workplace is 100% incompatible with building great software.

On the other hand, pressure is all around us. We have pressure to succeed. Pressure to get the build right. Pressure to get the design right. Pressure to go live with content. Pressure is a motivator. Pressure is defined as:

pressure: urgency, as of affairs or business

The thing about pressure is that it comes from within. Pressure is about the plan. Pressure is about your own goals (affairs). By operating with a plan whose details were created by the team, we transform what might be stress into pressure. Pressure comes because you want to be successful against the goals you have set out. Pressure comes because the peers you depend on are expecting you to deliver what was communicated. Pressure is about the constant force in our environment to deliver on the plan we developed together.

Pressure in the workplace is how we stay on our toes and put forth our best efforts. Performing under pressure, while challenging, is what helps us as engineers to make great choices and use the constraints to our (and our customers’) advantage.

No one works well under stress—the physical toll is real and provable. Some folks don’t work well under pressure. You don’t have to put yourself under pressure, but we’re a competitive company and like a great athletic team we do want that effort to go above 100%, but we can do so in a constructive way by using pressure to our advantage.

We’ve got some pressure going on now on our team. IE 8 in the final milestone. Integrating the M1 build of Windows Live. Windows 7 moving to M3. We’re excited. The pressure is real. It is pressure like being in the World Cup because we know what got us here and we know what it takes to be successful.

—Steven Sinofsky

PS: Yes, these words are similar. The beauty of words is the subtle differences that make them special.

PPS: I’m just excited to use the new build of LiveWriter – and the whole Wave 3 suite!

Privacy and Security: Less Rhetoric and More Product

Tim Cook’s recent remarks on privacy described as “blistering” or an “epic subtweet” amplified a discussion about the web and privacy. Given the polarizing framing of this topic, collectively as an industry (and beyond) we have been less than stellar at discussing these topics. We’ve also done a poor job at proposing broad initiatives to address concerns raised in the discussion.

We seem to be caught in that difficult situation of having defined the problem as requiring an all-or-nothing solution, which is never a good place to be because the reality is more nuanced. Dustin Curtis points out the nuance in this post Privacy vs. User Experience.

Rather than debate extremes that are neither desirable nor technically possible, I want to suggest there are technical problems that can and should be solved, and doing so would make the Internet a better place for people using Internet services and businesses providing services.

“Get Over It”

Way back before there were mobile phones, today’s search engines or social networks, or cloud computing, Sun Microsystems co-founder Scott McNealy said, “You have zero privacy anyway. Get over it.” Yikes!

The statement at the time had elements of truth, fear, and absurdity. It was pre-bubble; heck, it was still the 1990s and 1984 was still fresh in our collective psyche. The statement did, however, foretell a significant change in what was going to happen.

Such a debate is not new. While the scale is different, I recall three major products from reputable companies that introduced me to the absolutes and polarization of the privacy “debate”.

Robert Bork was nominated for the Supreme Court of the US in 1987 (and later failed confirmation in the Senate). One of the moments of the very contentious confirmation was the appearance of the nominee’s personal records from a video rental store (delivered to the press as a hand-written list). This was clearly a dubious act later codified to be illegal. I think for my generation, it was the first experience of how things would change in the digital age of record keeping via computer. At the time there were quite a few connections made to how the FBI maintained files on people, but this was the first time the “incriminating” information came from a benign consumer business.

Lotus Marketplace was a product developed in the late 1980s. It had the gall to collect data sources like US Census data and public phone number listings and put them on CD-ROMs for marketing people to use with Lotus 1-2-3 to plan and analyze marketing campaigns. Even worse, it had household and zip code level data about the US (all based on sources already in existence and available to businesses). Much debate centered on how one could take this data and potentially “triangulate” it to actually learn something about an individual. Likely due to the massive outcry, the product was never released to the market. From this early experience we can see that the combination of an existing data source and distribution of digital data changes the dynamic of privacy.

Credit card companies became famous for the offers inside your monthly statement in the 1980s—little paper inserts with offers to buy custom return address labels, go on cruises, or secure other financial products. Like confetti they would fall out of the envelope. These were the very definition of “junk mail”. Then the companies began to use your previous purchase history to target these inserts. If you were paying attention then you realized that junk mail started to look less and less junky. This “feature” turned into a fear that credit cards were selling your charging history to random companies. Of course that was not true (in fact such information was closely guarded). The way they worked was the credit card company would offer inserts matching specific target customers and insert them for a fee. Because financial companies were already tightly regulated, the path to today’s Byzantine opt-in/opt-out direct mail policies can be traced to this history.

Fast-forward to today and we know that the services we use amass significant information about how we interact with them. The medical establishment has my medical history available to a constellation of caregivers (and to me) that make delivery of quality care easier and faster. Credit card companies know my charging history and patterns and can alert me to fraud instantly (even if too often incorrectly). Netflix knows all the movies I watch (and even how much of them) and uses that to improve a highly valued recommendation engine. Pizza delivery services know what we order and can save time and effort by using that history (and also offer promotions based on that). Google Maps knows where I travel and when and proactively offers suggestions on when I should depart depending on current traffic. The examples are endless. In fact the benefits of maintaining my history of interaction with a service are immense and a deciding factor in which services I choose to use.

The risk that we have assumed on an individual service is that providers cannot maintain the integrity of their own services. This is a network technology risk as we have seen with Target or Home Depot. It is a human risk as we have seen with breaches like Sony where people set out to arbitrarily harm others. It is a national security risk such as we have seen with the recent attack on the federal government in the US, allegedly orchestrated by a nation-state.

The risk that a company will do “bad” things with the data it has as a result of using a service by all appearances is infinitesimal. Will some features feel creepy to some? Of course that is the case. Some people don’t like having their name and order remembered by the barista (a human form of big data).

The risk that a company will be breached and the data put to uses not intended by the company is not only there, but it is significant. This is a technology problem our industry needs to solve. One thing is clear and it is that the biggest companies are the biggest targets and the largest technology companies are (I would assert) the most savvy and adept at these issues. But the problem is incredibly difficult in a world of nation-states leading some attacks.

I am not a “get over it” person, but rather an engineer and product person that sees the desire of companies to use data to deliver far better services and that desire is leading to immense innovation in how commerce is conducted and the internet is used. At the same time this data is very attractive to bad actors for a variety of reasons and that is a technical challenge our industry will rise to as it has time and time again. This is first and foremost a security problem due to bad actors. The privacy challenges come from what happens with the data when used by good actors in the system and this is a much more nuanced challenge.

I do believe that if you simply want to skip using services that compile data then you should be able to opt out of services or to simply choose to use alternative services, but there is no obligation for any given service to provide the non-personalized, non-targeted, non-historic version of a service. The market for such services is likely to shrink and that might be unfortunate. The free market is like that. Sometimes something highly valued at a point in time becomes non-economic or scarce as companies compete for a larger market. I don’t have an easy answer for customers that want to use the internet without a trail; I strongly support the services and technologies that allow for that (encryption, Tor, etc.). I think the evidence is that this is not where most people will go. Historically, if there is money to be made then businesses will be created to seek that opportunity.

But What About Web Privacy

Why all the kerfuffle over privacy, again? My view is that this is rooted in the experience of the web that is just getting worse and many are frustrated. Security breaches of private information compound this concern and are symbolic of technology challenges on the Internet. Security breaches are unrelated to privacy in the sense that breached systems are not ad-funded user profiles, but wholly orthogonal and essential line of business information. The challenge is that our collective experience on the web is the result of a mountain of technologies built out over the past 20 years in an effort to deliver services to consumers that are paid for by advertising.

The act of delivering services paid for by advertising is not only inherently good and beneficial, but also essential to the amazing spread and growth of internet services. It should be readily apparent that the rise of internet advertising supported services is singularly responsible for the mass scale growth of billion-customer services. That is only good.

It isn’t that all my data is in the cloud waiting to be mined—AT&T, Comcast, Blockbuster, American Express, Nordstrom, Safeway, Amazon, UPS, and more already had a crazy amount of information about me and I would love exactly none of that to be in the hands of a bad actor. Even Apple knows every song I ever bought (if this happens to leak, I am saying now that I bought Barry Manilow Live for a friend’s birthday party), every place I ever used Maps to visit, and all my mail and contacts. Google has much of this too. I know Google is not selling my name and that information to anyone, but like a credit card insert they will match an advertisement for services to “people who visit New York”.

The challenge is that in an effort to improve the revenue yield of services all too often technology solutions available were used, abused, or otherwise misused in ways that degraded the overall experience of the internet for too many. The problem is that web ads are awful experiences and getting worse. We need technical solutions to this 20-year pile of legacy features.

My view is that the horrible experience of browsing the web and seeing those ads that “interfere” with using the web, and the fear that this experience will become what we all experience on the pristine world of mobile, is at least partially and likely largely responsible for using privacy as the anchor of this debate. In Steve Jobs’ “Thoughts on Flash” he was completely accurate about the problems of the runtime. That runtime was used as the basis of ads. He may or may not have been against ads, but many people were quite frustrated by the technical execution of ads in Flash and so his appeal resonated. It is just that few of us could do much about it.

The industry did not stand still. Over the years we have seen browsers add pop-up blocking. While advertisers were angry, people cheered. We’ve seen a dramatic rise in ad-blockers. Yet we still see a constant stream of complaints on the web about “wait 5 seconds to see your story” or user experiences that test even the most savvy gamer when it comes to finding and clicking the close box. But this is the technology choice on the web, not the nature of advertising itself.

Fixing the Web

I was a strong supporter of evolving the browser to support features that allowed consumers to choose how to secure their experience. From popup blockers to Do Not Track I advocated for this type of control. The reason was not because I am against “free” services or want everyone to browse anonymously without footprints. The reason is because the web got so messy that the recourse seemed to be to help people as individuals.

On a personal note, championing features like Do Not Track (DNT) was one of the more educational chapters in my own career. I had never experienced the “slippery slope” defense quite like that—the idea that a feature was just an on-ramp to the apocalypse would not overstate the reaction to such a feature. The argument against DNT was that overnight the free internet would vaporize, which was also the argument against popup blockers or the removal of Flash.

There are three parts of today’s web that are technology problems waiting for solutions. The solutions are either difficult or undesirable, but I believe solving them would go a long way towards moving the debate past a choice between “selling your personal information” or “there will be no services on the Internet”.

¶ Ads are awful. First and foremost, today’s ad formats are relatively hostile to consumers using services. We all know that ads want to be noticed. That’s a given. The openness and programmability of HTML5 and browsers created an open season on the technology used in ads and while there is plenty of innovation there are more negatives. Even the biggest and most popular sites can grind a browser to a halt on a powerful desktop PC. With television, for years advertisers tried tricks like raising the volume of an ad in order to get you to notice. This was fixed in the US by government regulation in 2012 with the CALM Act. We need such a movement for the internet. I believe the presence of advertising networks and internet standards bodies already in place (that create standard ad formats) could easily create standards around fly overs, popups, tiny close boxes, interstitial timings, audio and video playback, and so much more. We of course don’t want to stifle innovation, shut off A/B testing, or otherwise become the government but certainly we can create better technology and designs for advertisement. The popularity of browser based ad blockers is not about “privacy” but just about a desire to read more stuff and use more services in some reasonable way, I would assert.

¶ Content responsibility is lacking. One of the biggest places where advertising meets real-world security concerns is when advertisements themselves are vectors for security exploits. The advertising networks of today are well-known repositories for the distribution of zero-day exploits and malware insertion. While one could fault the browsers for not being able to secure against this, one must also fault the ad networks for allowing this content in the front door. One can also fault the sites that host this. As a consumer visiting a site, neither the site nor the ad network acts responsibly relative to this type of content. All will do takedowns but the effort to own up to this challenge is not what I think it could be (there’s plenty of hard work, but not enough). Ad formats themselves are part of the challenge. Should ads be allowed the full power of the browser and runtimes? Should we define a maximal set of capabilities that ads can use? There is lots to be done here.

¶ Accountability is non-existent. While we were working on “Do Not Track” one of the things that surprised me the most was the lack of accountability for some core information about people that I believe is a privacy challenge. Some have talked about this as the problem with cookies (again a polarizing way to describe something since I also like not having to sign into services I use all the time on my home PC again and again). But mostly this is probably the most unsavory part of the web when it comes to this issue of privacy and security. Quite simply, once I start using a site, especially if I am logged in, then the ability for that site to see and store my internet traversal history is just too easy. The accountability for this is nowhere to be seen. Even the most trustworthy of sites are in need of improvement along these lines. When I visit nytimes.com (just an example of an incredibly reputable site) I am visiting dozens and dozens of other web sites just on the home page. Below you can see a portion of the Web Page Privacy view from Internet Explorer showing all the URLs that compose a page. This is quite a surprise to most people who think that the links might go to a few photos or to some other servers for code or features. These are not subdomains of nytimes.com but whole other sites (honestly, do I really trust the domain ru4.com, which by the way resolves to “Perfect Privacy Incorporated” but has no home page or corporate information page). What are the privacy policies of these sites? What information do they get? Do they combine this information across their customers? It isn’t that they do this, it is that as a consumer I have no knowledge and as a site I visit the Times offers no transparency. This to me feels like a big challenge. I don’t mind the Times having my browsing history for the Times, not at all especially if I am logged on. I do mind all these companies with mysterious URLs following me around. 
When a company has my transaction history and uses it to deliver services, it does not send that history to others; it has others send information to it so that it can apply the history itself. Why can’t sites implement ads and analytics in this manner? You can see the lack of accountability in the terms of service, as shown for the nytimes.com site below.

Solutions are on the way

I believe there are deep concerns that the mobile internet will devolve into the desktop internet and we will lose the clean slate we currently have. This would be a shame because we need ad-supported services on mobile as well. We know the current experience of using a browser on mobile is racing towards the desktop—ironically because the browsers are getting better with video, script and runtime support and more.

The recent announcements by Facebook and partners show how innovation can happen. By providing a mechanism for ad-supported content to appear within a Facebook app experience natively and in a format that does not (necessarily) support many of the bad practices of the desktop web my view is that advertising can be more natural and at the same time relevant. My hope is that the runtime and/or the policies do not support the arbitrary nature of the desktop web and the experience does improve dramatically and stay that way for a while.

While all of this is taking place in the consumer world, the business computing landscape is being altered by the encroachment of consumer services. The natural reaction of the enterprise is to disable or turn off this access, which many believe is a losing strategy. In this context the biggest concern I would bring is the notion that the business internet will live alongside the consumer internet and all will be good. As a consumer, when I use my mobile device for work and personal, I want that conflation to exist in the service data I use. If I buy books for my own personal interest but use them for work or even get reimbursed by work, then I want my Amazon profile to have that. If I use Maps to navigate to partners or customers I don’t want to sign on and sign off or use a different Maps instance, but want to train a single instance. If I use a productivity tool for home and work, I want the usage and quality data to flow to the service so that the product gets better for how I use it. In all cases, the unified view of “me” makes everything I use better and that makes me a better employee using tools. I’d hate for IT to see privacy as another thing to enforce by degrading my experience.

Ultimately, the web will continue to evolve and free services will continue to grow. That is super important to the future for the next 3 billion internet users. There will always be a distribution of views of how much information should be saved and what services will be valued. We should not judge each other on that any more than we can expect every service to cater to every perspective on this topic. For a moment, we can look at the challenges we’re discussing and see the engineering and product development work that can be done. I believe we can collectively improve the current situation if we take steps to design new products and services that meet the needs of all parties.

# # # # #

Africa’s Mobile-Sun Revolution

Solar panels are a part of the landscape in southern Africa. Here, two boys ham it up for the camera while playing around a large-form factor panel.

The transformative potential for mobile communications is upon us in every aspect of life. In the developing world where infrastructure of all types is at a premium, few question the potential for mobile, but many wonder whether it should be a priority.

Note: This post originally appeared in Re/code on April 29, 2015.

Many years of visiting the developing world have taught me that, given the tools, people — including the very poor — will quickly and easily put them to uses that exceed even the well-intentioned ideas of the developed world. Poor people want to and can do everything people of means can do, they just don’t have the money.

Previously, I’ve written about the rise of ubiquitous mobile payments across Africa, and the work to bring free high-speed Wi-Fi to the settlements of South Africa. One thing has been missing, though, and that is access to reliable sources of power to keep these mobile phones and tablets running. In just a short time, less than a year, solar panels have become a commonplace sight in one relatively poor village I recently returned to. I think this is a trend worth noting.

It is also the sort of disruptive trend we are getting used to seeing in developing markets. The market need and context leads to solutions that leapfrog what we created over many years in the developed world. Wireless phones skipped over landlines. Smartphones skipped over the PC. Mobile banking skipped over plastic cards and banks.

Could it be that solar power, potentially combined with large-scale batteries, will be the “grid” in developing markets, perhaps at least in the near future? I think so. At the very least, solar will prove enormously useful and beneficial and require effectively zero-dollar investments in infrastructure to dramatically improve lives. Solar combined with small-scale appliances, starting with mobile phones, provides an enormous increase in standard of living.

Infrastructure history

Historically, being poor in a developing economy put you at the end of a long chain of government and international NGO assistance when it comes to infrastructure. While people can pull together the makings of shelter and food along with subsistence labor or farming, access to what we in the developed world consider basic rights continues to be a remarkable challenge.

For the past 50 or more years, global organizations have been orchestrating “top down” approaches to building infrastructure: roads, water, sewage and housing. There have been convincing successes in many of these areas. The recent UN Millennium Development Goals report demonstrates that the percentage of humans living in extreme poverty has decreased by almost half. In 1990, almost half the population in developing regions lived on less than $1.25 a day, the common definition of extreme poverty. This rate dropped to 22 percent by 2010, reducing the number of people living in extreme poverty by 700 million.

Nevertheless, billions of people live every day without access to basic infrastructure needs. Yet they continue to thrive, grow and improve their lives.

This UN Millennium Development Goals infographic shows the dramatic decline in percentage of people living under extreme poverty. (United Nations)

While the efforts to introduce major infrastructure will continue, the pace can sometimes be slower than either the people would like or what those of us in the developed world believe should be “acceptable.”

A village I know of, about 10 miles outside a major city in southern Africa, started from a patch of land contributed by the government about six years ago, and grew to a thriving neighborhood of 400 single-family homes. These homes are multi-room, secure, cement structures with indoor connections to sewage. The families of these homes earn about $100-$200 a month in a wide range of jobs. By way of comparison, these homes cost under $10,000 to build.

While the roads are unpaved, this is hardly noticed. But one thing that has become much more noticeable of late is the lack of electrical power. Historically, this has not been nearly as problematic as we in the developed world might think. Their economy and jobs were tuned to daylight hours and work that made use of the energy sources available.

Solar-powered streetlights have been installed recently — here under construction — increasing public safety and providing light to the community.

Several finished homes around a nearly complete streetlight installation that also illuminates a drinking-water well, enabling nighttime access to water.

In an effort to bring additional safety to the village, the citizens worked with local government to install solar “street lights,” such as the one pictured here. This simple development began to change the nighttime for residents. These were installed beginning about nine months ago (as seen in the first photo, with a closer to production installation in the second).

Historically, this type of infrastructure, street lighting, would come after a connection to the electrical grid and development of roads. Solar power has made this “reordering” possible and welcome. Lighting streets is great, but that leads to more demands for power.

Mobile phones, the new infrastructure

These residents are pretty well off, even on relatively low wages that are three to five times the extreme poverty level. While they lack electricity and roads, they are safe, secured and sheltered.

One of the contributors to the improved standard of living has been mobile phones. Over the past couple of years, mobile phone penetration in this village has reached essentially 100 percent per household, and most adults have a mobile.

The use of mobiles is not a luxury, but essential to daily life. Those that commute into the city to sell or buy supplies can check on potential or availability via mobile.

Families can stay connected even when one goes far away for a good job or better work. Safety can be maintained by a “neighborhood watch” system powered by mobile. Students can access additional resources or teacher help via mobile. Of course, people love to use their phones to access the latest World Cup soccer results or listen to religious broadcasts.

All of these uses and infinitely more were developed in a truly bottom-up approach. There were no courses, no tutorials, no NGOs showing up to “deploy” phones or to train people. Access to the tools of communication and information as a platform were put to uses that surprise even the most tech-savvy (i.e., me). Mobile is so beneficial and so easy to access that it has quickly become ubiquitous and essential.

Last year, when I wrote for Re/code about mobile banking and free Wi-Fi, I received a fair number of comments and emails saying how this seemed like an unnecessary luxury, and that smartphones were being pushed on people who couldn’t afford the minutes or kilobytes, or would much rather have better access to water or toilets. The truth is, when you talk to people who live here, the priority for access unquestionably goes to mobile communication. In their own words, time and time again, the priority is attached to mobile communications and information.

Fortunately, because of the openness most governments have had to investments from multinational telecoms such as MTN, Airtel and Orange, most cities and suburban areas of the continent are well covered by 2G and often 3G connectivity. The rates are competitive across carriers, and many people carry multiple SIMs to arbitrage those rates, since saving pennies matters (calls within a carrier network are often cheaper than across carriers).

Mobile powered by solar

There has been one problem, though, and that is keeping phones charged. The more people use their phones (day and night), the more this has become a problem. While many of us spend time searching for outlets, what do you do when the nearest outlet might be a few miles away?

It is not uncommon to see one outlet shared by many members of a community. This outlet is in the community center, which is one of a small number of grid-connected structures. Note the variety of feature phones.

When there is an outlet, you often see people grouped around it, or one person volunteers to rotate phones through the charging cycles. Above is a picture of an outlet in the one building connected to power, the community center. This is a pretty common sight.

Small portable solar panels can serve as “permanent” power sources when roof-mounted. You can see the extension wire drawn through the window.

An amazing transformation is taking place, and that is the rise of solar. What we might see as an exotic or luxury form of power for hikers and backpackers, or something reasonably well-off people use to augment their home power, has become as common a sight as the water pump.

The plethora of phones sharing a single outlet has been replaced by the portable solar panel out in front of every single home.

An interesting confluence of two factors has brought solar so quickly and cheaply to these people. First, as we all know, China has been investing massively in solar technology, solar panels and solar-powered devices. That has brought choice and low prices, as one would expect. In seeking growth opportunities, Chinese companies are looking to the vast market opportunity in Africa, where people are still not connected to a grid. There’s a full supply chain of innovation, from the solar through to integrated appliances with batteries.

Second, China has a significant presence in many African countries, and is contributing a massive amount of support in dollars and people to build out more traditional infrastructure, particularly transportation. In fact, many Chinese immigrants in country on work projects become the first customers of some of these solar innovations.

People are exposed to low-cost, low-power portable solar panels and they are “hooked.” In fact, you can now see many small stores that sell 100w panels for the basics of charging phones. You can see solar for sale in the image below. I left the whole store in the photo just to offer a bit of culture. The second photo shows the solar “for sale” offers.

A typical storefront in this community, selling a variety of important products for the home. Solar panels are for sale, as indicated by the signs in the upper left.

Detail from the storefront showing the solar panels for sale. There is a vibrant after-market for panels, as they often change hands, depending on the capital needs of a family.

Like many significant investments, there’s a vibrant market in both used panels and in the repair and maintenance of panels and wiring. Solar is a budding industry, for sure.

But people want more than to charge their phones once they see the “power” of solar. Here is where ever-improving and ever-shrinking solar panels, LED lights, lithium batteries and more are coming together to transform the power consumption landscape and the very definition of “home appliances.”

In the developed world, we are transitioning from incandescent and fluorescent lighting at a rapid pace (in California, new construction effectively requires LED). LED lights, in addition to lasting “forever,” also consume 80 percent less power. Combining LED lights, low-cost rechargeable batteries and solar, you can all of a sudden light up a home at night. Econet is one of the largest mobile carriers/companies in Africa, and has many other ventures that improve the lives of people.

Here are a few Econet-developed LED lanterns recharging outside a home. This person has three lights, and shares or rents them with neighbors as a business. Not only are these cheaper and more durable than a fossil-fuel-based lantern, they have no ongoing cost, since they are powered by the sun.

Several modern, portable, solar-powered LED lamps sold at very low cost by mobile provider Econet. The owner rents these lamps out for short-term use.

With China bringing down the cost of larger panels, and the abundance of trade between Africa and China, there’s an explosion in slightly larger solar panels. In fact, many of the homes I saw just nine months ago now commonly sport a large two-by-four-foot solar panel on the roof or strategically positioned for maximal use.

These two boys were hanging out when I walked by, and quickly chose a formal pose in front of their home, which has a large permanent solar panel mounted on the roof.

Panels are often on the ground, because they move between homes where the investment for the panel has been shared by a couple of families. This might seem inefficient or odd to many, but the developing world is the master of the shared economy. Many might be familiar with the founding story of Lyft, which grew out of Zimride, a service inspired by shared van rides in Zimbabwe.

A trio of medium-sized solar panels strategically placed outside the doors of several homes sharing a courtyard.

Just the first step

We are just at the start of this next revolution in improving the lives of people in developing economies using solar power.

Three sets of advances will contribute to improved standards of living relative to economics, safety and comfort.

First, more and more battery-operated appliances will make their way into the world marketplace. At CES this year, we saw battery-operated developed-market products for everything from vacuum cleaners to stoves. Once something is battery-powered, it can be easily charged. These innovations will make their way to appliances that are useful in the context of the developing world, as we have seen with home lighting. The improvement in batteries in both cost and capacity (and weight) will drive major changes in appliances across all markets.

Second, the lowering of the price of solar panels will continue, and they will become commonplace as the next infrastructure requirement. This will then make possible all sorts of improvements in schools, work and safety. One thing that can then happen is an improvement in communication that comes from high speed Wi-Fi throughout villages like the one described here. Solar can power point-to-point connectivity or even a satellite uplink. Obviously, costs of connectivity itself will be something to deal with, but we’ve already seen how people adapt their needs and use of cash flow when something provides an extremely high benefit. It is far more likely that Wi-Fi will be built out before broad-based 3G or 4G coverage and upgrades can happen.

Third, I would not be surprised to see innovations in battery storage make their way to the developing markets long before they are ubiquitous in the developed markets.

A full-sized “roof” solar panel leaning up against a clothesline. Often roof-mounting panels is structurally challenging, so it is not uncommon to see these larger panels placed nearby on the ground.

Developed markets will value batteries for power backup in case of a loss of power and solar storage (rather than feeding back to the grid). But in the developing markets, a battery pack could provide continuous and on-demand power for a home in quantity, as well as nighttime power allowing for studying, businesses and more. This is transformative, as people can then begin to operate outside of daylight hours and to use a broader range of appliances that can save time, increase safety in the home and improve quality of life.

Our industry is all about mobile and cloud. With the arrival of low-cost solar, it’s no surprise that the revolution taking place in developing markets these days is rooted in mobile-sun.

Photos by the author unless otherwise noted.

You’re doing it wrong

Smartphones and tablets, along with apps connected to new cloud-computing platforms, are revolutionizing the workplace. We’re still early in this workplace transformation, and the tools so familiar to us will be around for quite some time. The leaders, managers, and organizations that are using new tools sooner will quickly see how tools can drive cultural changes: developing products faster, with less bureaucracy and more focus on what’s important to the business.

If you’re trying to change how work is done, changing the tools and processes can be an eye-opening first step.

Check out a podcast on this topic hosted by Andreessen Horowitz’s Benedict Evans. Available on Soundcloud or on a16z.com.

Many of the companies I work with are creating new productivity tools, and every company starting now is using them as a first principle. Companies run their business on new software-as-a-service tools. The basics of email and calendaring infrastructure are built on the tools of the consumerization of IT. Communication and work products between members of the team and partners are using new tools that were developed from the ground up for sharing, collaboration and mobility.

Some of the exciting new tools for productivity that you can use today include: Quip, Evernote, Box and Box Notes, Dropbox, Slack, Hackpad, Asana, Pixxa Perspective, Haiku Deck, and more below. This list is by no means exhaustive, and new tools are showing up all the time. Some tools take familiar paradigms and pivot them for touch and mobile. Others are hybrids of existing tools that take a new view on how things can be more efficient, streamlined, or attuned to modern scenarios. All are easily used via trials for small groups and teams, even within large companies.

Tools drive cultural change

Tools have a critical yet subtle impact on how work gets done. Tools can come to define the work, as much as just making work more efficient. Early in the use of new tools there’s a combination of a huge spike in benefit, along with a temporary dip in productivity. Even with all the improvements, all tools over time can become a drag on productivity as the tools become the end, rather than the means to an end. This is just a natural evolution of systems and processes in organizations, and productivity tools are no exception. It is something to watch for as a team.

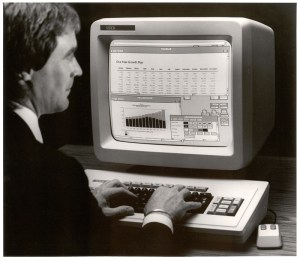

The spike comes from the new ways information is acquired, shared, created, analyzed and more. Back when the PC first entered the workplace, it was astounding to see the rapid improvements in basic things like preparing memos, making “slides,” or the ability to share information via email.

There’s a temporary dip in productivity as new individual and organizational muscles are formed and old tools and processes are replaced across the whole team. Everyone individually, and the team as a whole, feels a bit disrupted during this time. Things rapidly return to a “new normal,” and with well-chosen tools and thoughtfully designed processes, this is an improvement.

As processes mature or age, it is not uncommon for those very gains to become burdensome. When a new lane opens on a highway, traffic moves faster for a while, until more people discover the faster route, and then it feels like things are back where they started. Today’s most common tools and processes have reached a point where the productivity increases they once brought feel less like improvements and more like extra work that isn’t needed. All too often, the goals have long been lost, and the use of tools is on autopilot, with the reason behind the work simply “because we always did it that way.”

New tools are appearing that offer new ways to work. These new ways are not just different — this is not about fancier reports, doing the old stuff marginally faster, or bigger spreadsheets. Rather, these new tools are designed to solve problems faced by today’s mobile and continuous organization. These tools take advantage of paradigms native to phones and tablets. Data is stored on a cloud. Collaboration takes place in real time. Coordination of work is baked into the tools. Work can be accessed from a broad range of computing devices of all types. These tools all build on the modern SaaS model, so they are easy to get, work outside your firewall and come with the safety and security of cloud-native companies.

The cultural changes enabled by these tools are significant. While it is possible to think about using these tools “the same old way,” you’re likely to be disappointed. If you think a new tool that is about collaboration on short-lived documents will have feature parity with a tool for crafting printed books, then you’re likely to feel like things are missing. If you’re looking to improve your organizational effectiveness at communication, collaboration and information sharing, then you’re also going to want to change some of the assumptions about how your organization works. The fact that the new tools do some things worse and other things differently points to the disruptive innovation that these products have the potential to bring — the “Innovator’s Dilemma” is well known to describe the idea that disruptive products often feel inferior when compared to entrenched products using existing criteria.

Overcoming traps and pitfalls

Based on seeing these tools in action and noticing how organizations can re-form around new ways of working, the following list compiles some of the most common pitfalls addressed by new tools. In other words, if you find yourself doing these things, it’s time to reconsider the tools and processes on your team, and try something new.

Some of these will seem outlandish when viewed through today’s lens. As a person who worked on productivity tools for much of my career, I think back to the time when it was crazy to use a word processor for a college paper; or when I first got a job, and typing was something done by the “secretarial pool.” Even the use of email in the enterprise was first ridiculed, and many managers had assistants who would print out email and then type dictated replies (no, really!). Things change slowly, then all of a sudden there are new norms.

In our Harvard Business School class, “Digital Innovation,” we crafted a notion of “doing it wrong,” and spent a session looking at disruption in the tools of the workplace. In that spirit, “you’re doing it wrong,” if you:

- Spend more time summarizing or formatting a document than worrying about the actual content. Time and time again, people over-invest in the production qualities of a work product, only to realize that all that work was wasted, as most people consume it on a phone or look for the summary. This might not be new, but it is fair to say that the feature sets of existing tools and implementation (both right for when they were created, I believe) would definitely emphasize this type of activity.

- Aim to “complete” a document, and think your work is done when a document is done. The modern world of business and product development knows that you’re never done with a product, and that is certainly the case for documents that are steps along the way. Modern tools assume that documents continue to exist but fade in activity — the value is in getting the work out there to the cloud, and knowing that the document itself is rarely the end goal.

- Figure out something important with a long email thread, where the context can’t be shared and the backstory is lost. If you’re collaborating via email, you’re almost certainly losing important context, and not all the right folks are involved. A modern collaboration tool like Slack keeps everything relevant in the tool, accessible by everyone on the team from everywhere at any time, but with a full history and search.

- Delay doing things until someone can get on your calendar, or you’re stuck waiting on someone else’s calendar. The existence of shared calendaring created a world of matching free/busy time, which is great until two people agree to solve an important problem — two weeks from now. Modern communication tools allow for notifications, fast-paced exchange of ideas and an ability to keep things moving. Culturally, if you let a calendar become a bottleneck, you’re creating an opening for a competitor, or an opportunity for a customer or partner to remain unhappy. Don’t let calendaring become a work-prevention tool.

- Believe that important choices can be distilled down into a one-hour meeting. If there’s something important to keep moving on, then scheduling a meeting to “bring everyone together” is almost certainly going to result in more delays (in addition to the time to get the meeting going in the first place). The one-hour meeting for a challenging issue almost never results in a resolution, but always pushes out the solution. If you’re sharing information all along, and the right people know all that needs to be known, then the modern resolution is right there in front of you. Speaking as a person who almost always shunned meetings to avoid being a bottleneck, I think it’s worth considering that the age-old technique of having short and daily sync meetings doesn’t really address this challenge. Meetings themselves, one might argue, are increasingly questionable in a world of continuously connected teams.

- Bring dead trees and static numbers to the table, rather than live, onscreen data. Live data analysis was invented 20 years ago, but too many still bring snapshots of old data to meetings which then too often digress into analyzing the validity of numbers or debating the slice/view of the data, further delaying action until there’s an update. Modern tools like Tidemark and Apptio provide real-time and mobile access to information. Meetings should use live data, and more importantly, the team should share access to live data so everyone is making choices with all the available information.

- Use the first 30 minutes of a meeting recreating and debating the prior context that got you to a meeting in the first place. All too often, when a meeting is scheduled far in advance, things change so much that by the time everyone is in the room, the first half of the hour (after connecting projectors, going through an enterprise log-on, etc.) is spent with everyone reminding each other and attempting to agree on the context and purpose of the gathering. Why not write out a list of issues in a collaborative document like Quip, and have folks share thoughts and data in real time to first understand the issue?

- Track what work needs to happen for a project using analog tools. Far too many projects are still tracked via paper and pen which aren’t shared, or on whiteboards with too little information, or in a spreadsheet mailed around over and over again. Asana is a simple example of an easy-to-use and modern tool that decreases (to zero) email flow, allows for everyone to contribute and align on what needs to be done, and to have a global view of what is left to do.

- Need to think about which computer or device your work is “on.” Cloud storage from Box, Dropbox, OneDrive and others makes it easy (and essential) to keep your documents in the cloud. You can edit, share, comment and track your documents from any device at any time. There’s no excuse for having a document stuck on a single computer, and certainly no excuse for risking the use of USB storage for important work.

- Use different tools to collaborate with partners than you use with fellow employees. Today’s teams are made up of vendors, contractors, partners and customers all working together. Cloud-based tools solve the problem of access and security in modern ways that treat everyone as equals in the collaboration process. There’s a huge opportunity to increase the effectiveness of work across the team by using one set of tools across organizational boundaries.

Many of these might seem far-fetched, and even heretical to some. From laptops to color printing to projectors in conference rooms to wireless networking to the Internet itself, each of those tools was introduced to skeptics who said the tools currently in use were “good enough,” and the new tools were slower, less efficient, more expensive, or just superfluous.

The teams that adopt new tools and adapt their way of working will be the most competitive and productive teams in an organization. Not every tool will work, and some will even fail. The best news is that today’s approach to consumerization makes trial easier and cheaper than at any other time.

If you’re caught in a rut, doing things the old way, the tools are out there to work in new ways and start to change the culture of your team.

–Steven Sinofsky @stevesi

This article originally appeared on Re/code.

Hiring for a job you never did or can’t do

One of the most difficult stages in growing your own skillset is when you have to hire someone for a job you can’t actually do yourself. Whether you’re a founder of a new company, or just growing a company or team, at some point the skills needed for a growing organization exceed your own experience.

Admitting that you don’t really have the skills the business requires is the first, and most difficult step. This is especially true as an engineer where there’s a tendency to think we can just figure things out. It is not uncommon to go through a thought process that basically boils down to: coding must be the hardest job, so all the other jobs can be done by someone with coding skills.

Fight the fear, let go of control, and make moves towards a well-rounded organization.

Nice try.

If you’ve ever tried some simple home repairs or paint touchup you know this logic doesn’t work—you only need to spend an hour watching some cable TV DiY show and you can see how the people with skills are always unraveling the messes created by those who thought they could improvise. The software equivalent can sometimes be seen as a developer attempting to design the user interaction flow in a paint program or PowerPoint. Sure it can be done by a developer (and there are talented developers who can of course do it all), but he or she can quickly reach their limits, and so will the user interaction.

Open up your engineer’s mind to embrace the truth that every other discipline or function you will ever collaborate with has a deep set of skills and experiences that you lack. Relative to engineering, the “softer” skills often pose the biggest eye-opening surprise to engineers. Until you’ve seen the magic worked by those skilled in marketing, communications, sales, business development or a host of other disciplines you might not appreciate the levels of success you can achieve by turning over the task to trained professionals.

I have seen this first-hand many times. Most recently it occurred while working with the a16z portfolio company Local Motion when it came time to do some of the early announcements around the fleet-management company. The co-founders possess engineering and design backgrounds from elite institutions, and built the product themselves, hardware and software. Both are experienced mountaineers, and so they have this engrained sense of self-sufficiency, which is valuable both for building companies and scaling mountains.

When it came time to work with the industry press to tell the story of their company, in some ways they had to suppress their self-sufficient instincts. The founders were self-aware enough to know they had not done this before and agreed to enlist the help of those who have depth and breadth of experience. The pros showed up and spent time learning the team, the business, and the story (professionals do that!). They came back with a plan, roles, responsibilities, and defined what success would look like. It was amazing to watch how the founders absorbed and learned at each step all those things which they had not personally experienced before.

This sounds easy and pretty obvious. But if you put yourself in their shoes you know that this is not just bringing in a hired gun to get some press. Rather, this is hiring on a new member of the team and a new founding member of the family. What is vital to keep in mind is that this kind of work is as important as every line of code and every circuit board. The lesson of letting go and letting professionals do their work is clear: delegating is never easy for most, but is spectacularly difficult if you don’t know what the other person is going to do and when the outcome matters a whole lot. Still, you need to let the specialists into your carefully engineered world.

There are moments of terror. You’re watching people talk about your product using tools and techniques you are unfamiliar with to connect with your potential customers. Even though it is a product, you are apt to feel as though this is a discussion about yourself. You question every step. You doubt the skills of the person you hired. You are certain everything will go wrong.

It is at that point—right when you start to panic and think that unless you do this yourself things will fail—that you need to let go. You need to say “yes” to hiring a person to do the work, and then let them do their best work.

Just keep reminding yourself that you’ve never done the job before, and that your role is to hire someone who knows more than you. Even when you’ve wrapped your head around that, there are a few ways you can get tripped up. The key to success here is avoiding these mistakes when you are in the hiring process:

- Asking candidates to teach you. A good candidate will of course know more than you. Their interview is not a time for them to teach you what they do for a living. The interview is for you to learn the specifics of a given candidate, not the job function. The best bet is to do your homework. If you’re hiring your first sales leader then use your network and talk to some subject matter experts and learn the steps of the role ahead of time.

- Expecting a candidate to know or create your strategy. It is fine to expect engineering candidates to know the tools and techniques you use. You wouldn’t expect an engineering candidate to know your unannounced product, of course. It is equally challenging to expect a new marketing person to have a marketing plan for your product. Even if you ask them to brainstorm for hours, keep in mind the inputs into the process—they only know the specifics you have provided them. For example, don’t expect a marketing candidate to magically come up with the right pricing strategy for your product without a chance to really dive in. On the other hand, you can expect a candidate to walk you through in extreme detail their most recent work on a similar topic. You can get to their thought process and how they worked through the details of the problem domain.

- Interviewing too many folks. You will always hear stories about the best hire ever after seeing 100 people. Those stories are legendary. On the other hand, you rarely hear the stories that start with “we could not find the perfect QA leader so we waited and waited until we had a quality crisis.” Yet these latter stories happen far too often. Again, you should not compromise, but if after bringing a dozen or more people through a process you are still searching, consider the patterns you’re seeing and why this is happening. A good practice if you’ve not found the right hire after going through a lot of folks is to bring in a new point of view. Consider recruiting the help of a search firm, a board member, or a subject matter advisor to get you over the first hire in a new job function.

These add up to the quest for the perfect hire. When it comes to engineering you give yourself a lot of leeway because you feel you can direct a less experienced person and because you can gauge more easily what they know and don’t know. When it comes to other roles you become more reluctant to let go of a dream candidate. This almost always nets out costing you time, and in a new effort time is money. That isn’t saying to settle, but it is saying to use the same techniques of approximation you naturally use when hiring people in your comfort zone.

The most difficult part of hiring for a job you don’t know first-hand is the human side. Every growing organization needs diversity because every product and service is used by a diverse group of people. The different job functions often bring with them diversity of personality types that add to the challenges of hiring. The highly analytical developer looking to hire a strong qualitative thinker for marketing, or the highly empathetic sales leader, is often going to face a challenge just making the human connection.

This human connection is a two-way street. Embrace it. Recognize the leap each of you is taking. Realize that the interpersonal skills required to call on customers every day are just different than the interpersonal skills used when hacking. The challenge of making that human connection is one for the person doing the hiring to overcome. Often that’s the biggest opportunity for personal growth when hiring people to do a job you can’t.

–Steven Sinofsky (@stevesi)

Note: A form of this post originally appeared on FastCo.

Why was 1984 not really like “1984”, for me

For me, 1984 was the year of Van Halen’s wonderful [sic] album, The Right Stuff, and my second semester of college. It would also prove to be a time of enlightenment for me and computing. On this 30th anniversary of the Apple Macintosh on January 25 and the Super Bowl commercial on January 22, I wanted to share my own story of the way the introduction of the Macintosh profoundly changed my path in life.

Perhaps a bit indulgent, but it seemed worth a little backstory. I think everyone from back then is feeling a bit of nostalgia over the anniversary of the commercial, the product, and what was created.

High School, pre-Macintosh

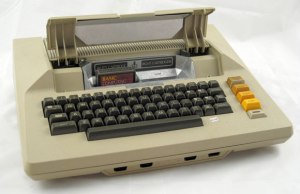

Like many Dungeons and Dragons players my age, my first exposure to post-Pong computing was an Atari 800 that my best friend was lucky enough to have (our high school was not one to have an Apple ][ which hadn’t really made it to suburban Orlando). While my friends were busy listening to the Talking Heads, Police, and B-52s, I was busy teaching myself to program on the Atari. Even though it had the 8K BASIC cartridge it lacked tape storage. Every time I went over to use the computer I had to start over. Thinking about business at an early age (I suppose) I would continue to code and refine what I thought would be a useful program for our family business, the ability to compute sales tax on purchases from different states. Enter the total sale, compute the sales tax for a state by looking up the rate in a table.

My father, an entrepreneur but hardly a technologist, was looking to buy a computer to “automate” our family business. In 1981, he characteristically dove head first into computing and bought an Osborne I. For a significant amount of money ($1,795, or $4,600 today) we owned an 8 bit CPU and two 90K floppy drives and all (five) of the business programs one could ever need.

I started to write a whole business suite for the business (inventory, customers, orders) in BASIC which is what my father had hoped I would conjure up (in between SATs and college prep). Well that was a lot harder than I thought it would be (so were the SATs). Then I discovered dBase II and something called a “database” that made little sense to me in the abstract (and would only come to mean something much later in my education). In a short time I was able to create a character-based system that would be used to run the family business.

To go to college I had a matching Osborne I with a 300b modem so I could do updates and bug fixes (darn that shipping company–they changed the rate on COD shipments right during midterms which I had hard-coded!).

College Fall Semester

I loaded up the Osborne I and my Royal typewriter/daisy wheel/parallel port “letter quality” printer and was off to sunny Ithaca.

Computer savvy Cornell issued us our “BITNET electronic mail accounts”; mine was TGUJ@CORNELLA.EDU. Equal parts friendly, memorable, and useful, and no one knew what to do with them. The best part was that the email ID came printed on a punch card. As a user of an elite Osborne, I felt I had gone back in time when I had to log on to the mainframe from a VT100 terminal. The only time I ever really used TGUJ was to apply for a job with Computer Services.

I got a job working for the computer services group as a Student Terminal Operator (STO). I had two 4 hour shifts. One was in the main computer science major “terminal room” in Upson Hall featuring dozens of VT100 terminals. The other shift was Friday night (yes, you read that correctly) at the advanced “lab” featuring SGI graphics workstations, IBM PC XTs, an Apple Lisa, peripherals like punch card machines, and a 5′ tall high-speed printer. For the latter, I was responsible for changing the ribbon, a task that required me to put on a mask and plastic arm-length gloves.

It turned out that Friday night was all about people coming in to write papers on the few IBM/MS-DOS PCs using WordPerfect. These were among the few PCs available for general purpose use. I spent most of the time dealing with graduate students writing dissertations. My primary job was keeping track of the keyboard templates that were absolutely required to use WordPerfect. This experience would later make me appreciate the Mac that much more.

In the computer science department I had a chance to work on a Xerox Star and Alto (see below) along with Sun Workstations, microVAX mini, and so on. The resources available were an incredible blessing to the curious. The computing world was a cacophony of tools and platforms with the vast majority of campus not yet tapping into the power of computing and those that did were using what was most readily accessible. Cornell was awash in the sea of different computing platforms, and to my context that just seemed normal, like there were a lot of different types of cars. This was especially apparent from my vantage point in the computer facilities.

One experience with a new, top-secret, computer was about to change all that.

I ended up getting to use a new computer from an unidentified company. One night after my shift, a fellow STO dragged me back to Upson Hall and took me into a locked room in the basement. There I was able to see and use a new computer. It was a wooden box attached to a wall with an actual chain. It had a mouse, which I had used on the Xerox and Sun workstations. It had a bitmap screen like a workstation. It had an “interface” like the Xerox. There was a menu bar across the top and a desktop of files and folders. It seemed small and much more quiet than the dorm-refrigerator sized units I was used to hearing.

What was really magical about it was that it had a really easy to use painting program that we all just loved. It had a “word processor”. It was much easier to use than the Xerox which had special keys and a somewhat overloaded desktop metaphor. It crashed a lot even after a short time using it. It also started up pretty quickly. Most everything we did with it felt new and different compared to all the other computers we used.

The end of the semester and exams approached. The few times and couple of hours I had to play with this computer were exciting. In the sea of computing options, it was definitely the most exciting thing I had experienced. Perhaps being chained to the wall added to the excitement, but there was something that really resonated with us. When I try to remember the specifics, I mostly recall an emotional buzz.

My computing world was filled with diversity, and complexity, which left me unprepared for the way the world was going to change in just the next six weeks.

Superbowl

To think about Apple’s commercial, one really has to think about the context of the start of the year 1984. The Orwellian dialog was omnipresent. Of course as freshmen in college we had just finished our obligatory compare/contrast of the dystopian messages in Animal Farm, Brave New World, and 1984, not to mention the Cold War as front and center dialog at every turn. The country emerging from recession gave us all a contrasting optimism.

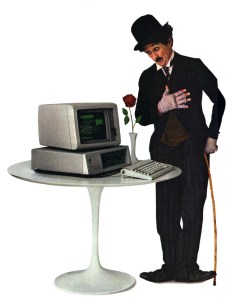

At the same time, IBM was omnipresent. IBM was synonymous with computing. Sure the Charlie Chaplin ads were great, but the image of computing to almost everyone was that of the IBM mainframe (CORNELLA was located out by the Ithaca airport). While IBM was almost literally the pillar of innovation (just a couple of years later, scientists at IBM would spell IBM with Xenon atoms), there was also a great deal of distrust given the tenor of the time. The thought of a globally dominant company, a computer company, was uncomfortable to those familiar with fellow Cornellian Kurt Vonnegut’s omnipresent RAMJAC.

Then the Apple commercial ran. It was truly mesmerizing (far more so to me than the Superbowl). It took me about one second to stitch together all that was going on right before my eyes.

Apple was introducing a new computer.

It was going to be a lot different from the IBM PC.

The world was not going to be like 1984.

And most importantly, the computer I had just been playing with weeks earlier was, in fact, the Apple Macintosh.

I was so excited to head back to the terminal rooms and talk about this with my fellow STOs and to use the new Apple Macintosh.

Returning

Upon returning to the terminal room in Upson, Macs had already started to replace VT100s. First just a couple and then over time, terminal access moved to an emulation program on Macs (rumor had it that the Macs were actually cheaper than terminals!).

My Friday night shift was transformed. Several Macs were added to the lab. I had to institute a waiting list. Soon only the stalwarts were using the PCs. I started to see a whole new crowd on those lonely computer nights.

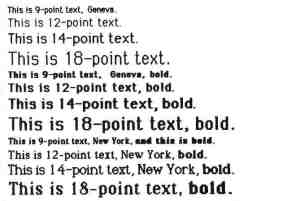

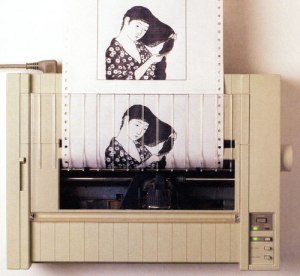

I saw seniors in Arts & Sciences preparing resumes and printing them on the ImageWriter (note, significantly easier to change the ribbon, which I had to do quite often every night). Those in the Greek System came by for help making signs for parties. Students discovered their talent with MacPaint pixel art and fat bits. All over campus signs changed overnight from misaligned stencils to ImageWriter printouts testing the limits of font faces per page.

I have to admit, however, I spent an inordinate amount of time attempting to recover documents that were lost to memory corruption bugs on the original MacWrite. The STOs all developed a great troubleshooting script and signs were posted with all sorts of guesses (no more than 4 fonts per document, keep documents under 5 pages, don’t use too many carriage returns). We anxiously awaited updates and students would often wait in line to update their “MacWrite disks” when word spread of an update (hey, there was no Internet download).

In short order, Macintosh swept across campus. Cornell along with many schools was part of Apple’s genius campaign on campuses. While I still had my Osborne, I was using Macintosh more often than not.

Completing College

The next couple of years saw an explosion of use of Macintosh across campus. The next incoming class saw many students purchasing a Mac at the start of college. Research funds were buying Macs. Everywhere you looked they were popping up on desks. There was even a dedicated store just off campus that sold and serviced Macs. People were changing their office furniture and layout to support using a mouse. Computer labs were being rearranged to support local printers and mice. The campus store started stocking floppy disks, which became a requirement for most every class.

Document creation had moved from typewriters and limited use of WordPerfect to near ubiquitous use of MacWrite practically by final exams that Spring. Later, Microsoft Mac Word, which proved far more robust, became the standard.

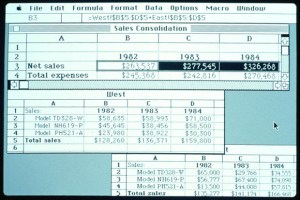

The Hotel School’s business students were using Microsoft Mac Excel almost immediately.

The Chemistry department made a wholesale switch to Macintosh. The software was a huge driver of this. It is hard to explain how difficult it was to prepare a chemistry journal article before Macintosh (the department employed a full time molecular draftsman to prepare manuscripts). The introduction of ChemDraw was a turning point for publishing chemists (half my major was chemistry).

It was in the Chemistry department where I found a home for my fondness of Macintosh and an incredibly supportive faculty (especially Jon Clardy). The research group had a little of everything, including MS-DOS PCs with mice which were quite a novelty. There were also Macs with external hard drives.

I also had access to MacApp and the tools (LightSpeed Pascal) to write my own Mac software. Until then all my programming had been on PCs (and mainframes, and Unix). I had spent two summers as an intern (at Martin Marietta, the same company where dBase programmer Wayne Ratliff worked!) hacking around MS-DOS writing utilities to do things that were as easy as drag and drop on a Mac or just worked with MacWrite and Mac Excel. As fun as learning K&R, C, and INT 21h was, the Macintosh was calling.

My first project was porting a giant Fortran program (Molecular Mechanics) to the Mac. Surprisingly it worked (perhaps today, equally surprising was the existence of a Fortran compiler). It cemented the lab’s view that the Macs could also be for work, not just document creation. Next up I just started exploring the visualizations available on the Mac. Programming graphics was all new to me. Programming an object-oriented event loop seemed mysterious and indirect to me compared to INT 21h or stdio.

But within a few hacking sessions (fairly novel to the chemistry department) the whole thing came together. Unlike all of the previous systems I used, the elegance of the Mac was special. I felt like the more I used it the more it all made sense. When I would bury myself in Unix systems programming it seemed more like a series of things, tricks, you needed to know. Macintosh felt like a system. As I learned more I felt like I was able to guess how new things would work. I felt like the bugs in my programs were more my bugs and not things I misunderstood.

The proof of this was that through the Spring semester my senior year I was able to write a program that visualized the periodic table of the elements using dozens of different variables. It was a way to explore periodicity of the elements. I wrote routines for an X-Y plot, bar charts, text tables, and the pièce de résistance was a 2.5-dimensional perspective of the periodic table showing a single property (commonly used to illustrate the periodic nature of electron affinity). I had to ask a lot of friends who were taking computer graphics on SGIs for help! Still, not only had I just been able to program another new OS (by then this was my 5th or 6th) but I was able to program a graphical user interface for the first time.

MacMendeleev was born.

The geek in all of us has that special moment when at once you feel empowered and marvel at a system. That day in the spring of 1987 when I rendered a perspective drawing from my own code on a system that I had seen go from a chained down plywood box to ubiquity across campus was magical. Even my final report for the project was, to me, a work of art.

It wasn’t just the programming that was possible. It wasn’t just the elegance and learnability of the system. It wasn’t even the ubiquity that the Macintosh achieved on campus. It was all of those. Most of all it represented a tool that allowed me to realize some of my own potential. I was awful at Chemistry. Yet with Macintosh I was able to contribute to the department and probably showed a professor or two that in spite of my lack of actual chemistry aptitude I could do something (and dang, my lab reports looked amazing!). I was, arguably, able to learn some chemistry.

I achieved with Macintosh what became one of the most important building blocks in my education.

I’m forever thankful for the empowerment that came from using a “bicycle of the mind”.

What came next

Graduate school diverged in terms of computing. We used DEC VMS, though SmallTalk was our research platform. So much of the elegance of the Macintosh OS (MacApp and Lisa before that) was much clearer to me as I studied the nuances of object-oriented programming.

I used my Macintosh II to write papers, make diagrams, and remote into the microVAX at my desk. I also used Macintosh to create a resume for Microsoft with a copy of Microsoft Word I won at an ACM conference for my work on MacMendeleev.

When I made it to Microsoft I found a great many shared the same experience. I met folks who worked on Mac Excel and also had Macs in boxes chained to tables. I met folks who wrote some of those Macintosh programs I used in college. So many of the folks in the “Apps” team I was hired into that year grew up on that unique mixture of Mac and Unix (Microsoft used Xenix back then). We all became more than converts to MS-DOS and Windows (3.0 was being developed when I landed at Microsoft).

There’s no doubt our collective experiences contribute to the products we each work on. Wikipedia even documents the influence of MacApp on MFC (my first product contribution), which was by design (and also by design was where not to be influenced). It is wonderful to think that through tools like MFC and Visual Basic along with ubiquitous computing, Windows brought to so many young programmers that same feeling of mastery and empowerment that I felt when I first used Macintosh.